
Ateliere Live 8.0.0 developer reference

- 1: System controller config

- 2: REST API v3

- 3: Rendering Engine config

- 4: Operational Control API

- 5: C++ SDK

- 5.1: Classes

- 5.1.1: Acl::AclLog::FileLocationFormatterFlag

- 5.1.2: Acl::AclLog::ThreadNameFormatterFlag

- 5.1.3: Acl::AlignedAudioFrame

- 5.1.4: Acl::AlignedFrame

- 5.1.5: Acl::ControlDataAddress

- 5.1.6: Acl::ControlDataCommon::ConnectionEvent

- 5.1.7: Acl::ControlDataCommon::Response

- 5.1.8: Acl::ControlDataCommon::StatusMessage

- 5.1.9: Acl::ControlDataSender

- 5.1.10: Acl::ControlDataSender::Settings

- 5.1.11: Acl::DeviceMemory

- 5.1.12: Acl::IControlDataReceiver

- 5.1.13: Acl::IControlDataReceiver::IRequest

- 5.1.14: Acl::IControlDataReceiver::RequestData

- 5.1.15: Acl::IControlDataReceiver::Settings

- 5.1.16: Acl::IMediaStreamer

- 5.1.17: Acl::IMediaStreamer::Configuration

- 5.1.18: Acl::IMediaStreamer::Settings

- 5.1.19: Acl::IngestApplication

- 5.1.20: Acl::IngestApplication::Settings

- 5.1.21: Acl::ISystemControllerInterface

- 5.1.22: Acl::ISystemControllerInterface::Callbacks

- 5.1.23: Acl::ISystemControllerInterface::Response

- 5.1.24: Acl::MediaReceiver

- 5.1.25: Acl::MediaReceiver::CustomSystemControllerCallResponse

- 5.1.26: Acl::MediaReceiver::NewStreamParameters

- 5.1.27: Acl::MediaReceiver::Settings

- 5.1.28: Acl::SystemControllerConnection

- 5.1.29: Acl::SystemControllerConnection::Settings

- 5.1.30: Acl::TimeCommon::TAIStatus

- 5.1.31: Acl::TimeCommon::TimeStructure

- 5.1.32: Acl::UUID

- 5.1.33: fmt::formatter< Acl::AudioChannelLayout >

- 5.1.34: fmt::formatter< Acl::ControlDataAddress >

- 5.1.35: fmt::formatter< Acl::ControlDataCommon::EventType >

- 5.1.36: fmt::formatter< Acl::FieldOrder >

- 5.1.37: fmt::formatter< Acl::ISystemControllerInterface::StatusCode >

- 5.1.38: fmt::formatter< Acl::SystemControllerConnection::ComponentType >

- 5.1.39: fmt::formatter< Acl::UUID >

- 5.2: Files

- 5.2.1: include/AclLog.h

- 5.2.2: include/AlignedFrame.h

- 5.2.3: include/Base64.h

- 5.2.4: include/ControlDataAddress.h

- 5.2.5: include/ControlDataCommon.h

- 5.2.6: include/ControlDataSender.h

- 5.2.7: include/DeviceMemory.h

- 5.2.8: include/IControlDataReceiver.h

- 5.2.9: include/IMediaStreamer.h

- 5.2.10: include/IngestApplication.h

- 5.2.11: include/IngestUtils.h

- 5.2.12: include/ISystemControllerInterface.h

- 5.2.13: include/MediaEnumerations.h

- 5.2.14: include/MediaReceiver.h

- 5.2.15: include/SystemControllerConnection.h

- 5.2.16: include/TimeCommon.h

- 5.2.17: include/UUID.h

- 5.3: Namespaces

- 5.3.1: Acl

- 5.3.2: Acl::AclLog

- 5.3.3: Acl::ControlDataCommon

- 5.3.4: Acl::IngestUtils

- 5.3.5: Acl::TimeCommon

- 5.3.6: fmt

- 5.3.7: spdlog

1 - System controller config

This page describes the settings that can be configured in the acl_sc_settings.json file.

| Expanded Config name | Config name | Description | Default value |

|---|---|---|---|

| psk | psk | The Pre-shared key used to authorize components to connect to the System Controller. Must be 32 characters long | "" |

| client_auth.enabled | enabled | Switch to enable basic authentication for clients in the REST API | true |

| client_auth.username | username | The username that will grant access to the REST API | "admin" |

| client_auth.password | password | Password that will grant access to the REST API | "changeme" |

| https.enabled | enabled | Switch to enable encryption of the REST API as well as the connections between the System Controller and the connected components | true |

| https.certificate_file | certificate_file | Path to the certificate file. In pem format | "" |

| https.private_key_file | private_key_file | Path to the private key file. In pem format | "" |

| logger.level | level | The level at which the logger produces output. Available levels, in ascending order: debug, info, warn, error | info |

| logger.file_name_prefix | file_name_prefix | The prefix of the log filename. A unique timecode and “.log” will automatically be appended to this prefix. The prefix can contain both the path and the prefix of the log file. | "" |

| site.port | port | Port on which the service is accessible | 8080 |

| site.host | host | Hostname on which the service is accessible | localhost |

| rate_limit.requests_per_sec | RateLimit.RequestsPerSec | The average number of requests that are handled per second, before requests are queued up (the number of tokens added to the token bucket per second) | 300 |

| rate_limit.burst_limit | RateLimit.BurstLimit | The number of requests that can be handled in a short burst before rate limiting kicks in (the maximum number of tokens in the token bucket) | 10 |

| response_timeout | response_timeout | The maximum time of a request between the System Controller and a component before the request is timed out | 5000ms |

| cors.allowed_origins | allowed_origins | Comma-separated list of origin addresses that are allowed | ["*"] |

| cors.allowed_methods | allowed_methods | Comma-separated list of HTTP methods that the service accepts | ["GET", "POST", "PUT", "PATCH", "DELETE"] |

| cors.allowed_headers | allowed_headers | Comma-separated list of headers that are allowed | ["*"] |

| cors.exposed_headers | exposed_headers | Comma-separated list of headers that are allowed for exposure | [""] |

| cors.allow_credentials | allow_credentials | Allow the XHR to set credentials cookies | false |

| cors.max_age | max_age | How long a preflight response is cached before a new preflight request must be made | 300 |

| custom_headers.[N].key | key | Custom headers, the key of the header, e.g.: Cache-Control | None |

| custom_headers.[N].value | value | Custom headers, the value to the key of the header, e.g.: no-cache | None |

| allow_any_version | allow_any_version | Allow components of any version to connect. When set to false, components with another major version than the System Controller will be rejected | false |
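The two rate_limit settings are described in terms of a token bucket. As a rough illustration of how those two numbers interact, here is a minimal Python sketch of a generic token bucket. It is not the System Controller's implementation: the class and method names are hypothetical, and the sketch only reports whether a request could be handled immediately, whereas the System Controller is described as queueing requests.

```python
import time


class TokenBucket:
    """Generic token bucket; illustrative only, not the System Controller's code."""

    def __init__(self, requests_per_sec: float, burst_limit: int, now=None):
        self.rate = float(requests_per_sec)   # tokens added per second
        self.capacity = float(burst_limit)    # maximum number of tokens in the bucket
        self.tokens = self.capacity           # start with a full bucket
        self.last = time.monotonic() if now is None else now

    def try_acquire(self, now=None) -> bool:
        """Take one token if available; return False when the caller has to wait."""
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.last)
        self.last = now
        # Refill according to elapsed time, but never above the burst limit.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# Default values from the table: 300 requests per second, burst of 10.
bucket = TokenBucket(requests_per_sec=300, burst_limit=10, now=0.0)
burst = [bucket.try_acquire(now=0.0) for _ in range(12)]
```

With the default values, a burst of twelve simultaneous requests finds tokens for the first ten only; the bucket then refills at 300 tokens per second up to the burst limit.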

2 - REST API v3

The following is a rendering of the OpenAPI for the Ateliere Live System Controller using Swagger. It does not have a backend server, so it cannot be run interactively.

This API is available from the server at the base URL /api/v3.
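As a small illustration of how the base URL combines with the site and client_auth settings of the System Controller, the following Python sketch builds (but does not send) a request carrying an HTTP Basic authentication header. The helper name build_request is hypothetical, the host, port and credentials are the defaults from the System Controller config table, and no specific endpoint path is assumed since the endpoints are defined in the OpenAPI rendering rather than on this page.

```python
import base64
import urllib.request

BASE_PATH = "/api/v3"  # base URL stated above


def build_request(host: str, port: int, username: str, password: str,
                  use_https: bool = True) -> urllib.request.Request:
    """Build, without sending, a request to the API base URL with a Basic auth header."""
    scheme = "https" if use_https else "http"
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    request = urllib.request.Request(f"{scheme}://{host}:{port}{BASE_PATH}")
    request.add_header("Authorization", f"Basic {token}")
    return request


# Default values from the System Controller config table.
request = build_request("localhost", 8080, "admin", "changeme")
```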

3 - Rendering Engine config

This page describes how to configure the Rendering Engine. This topic is closely related to this page on the control command protocol for the video and audio mixers.

Rendering Engine components

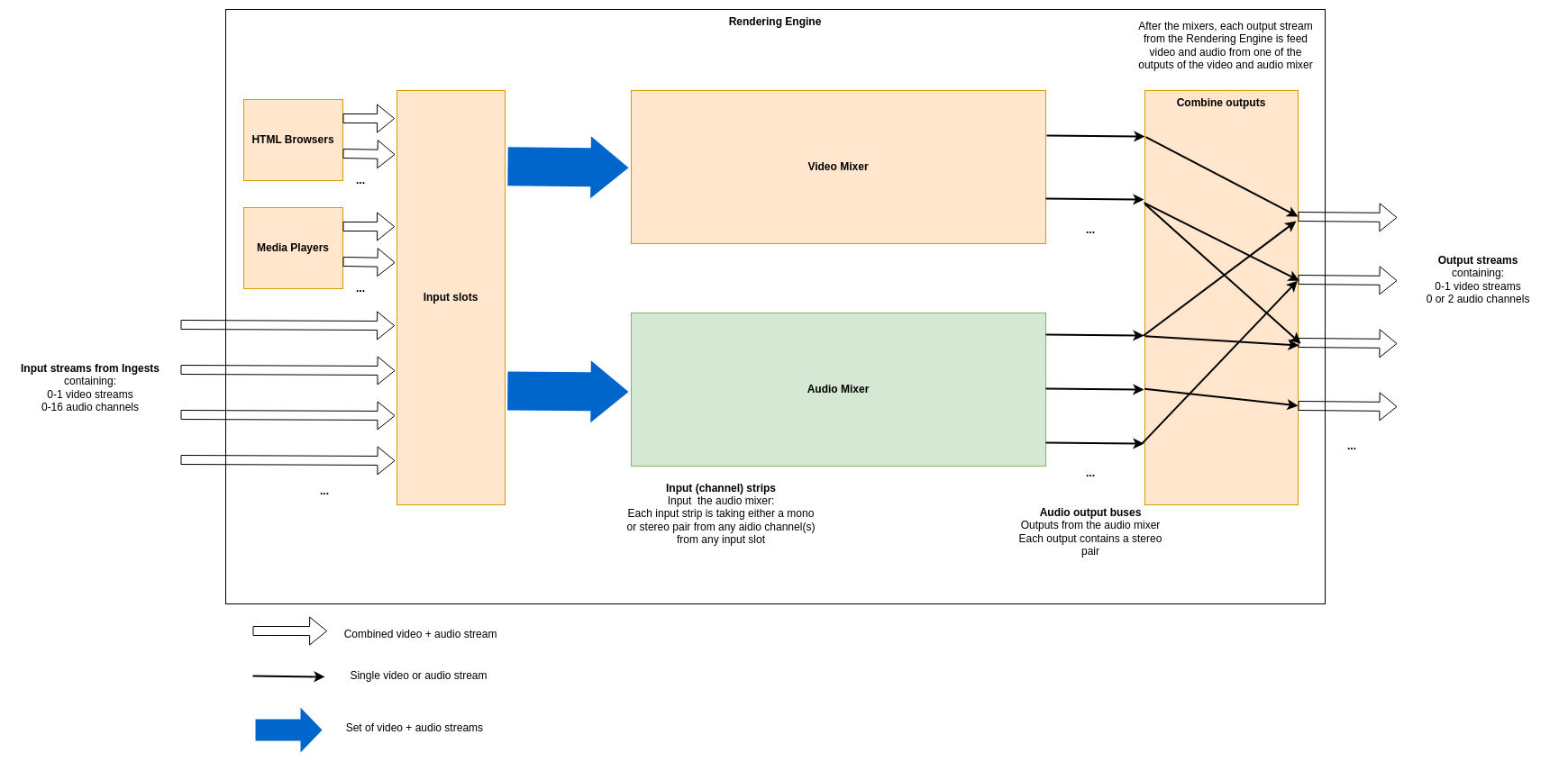

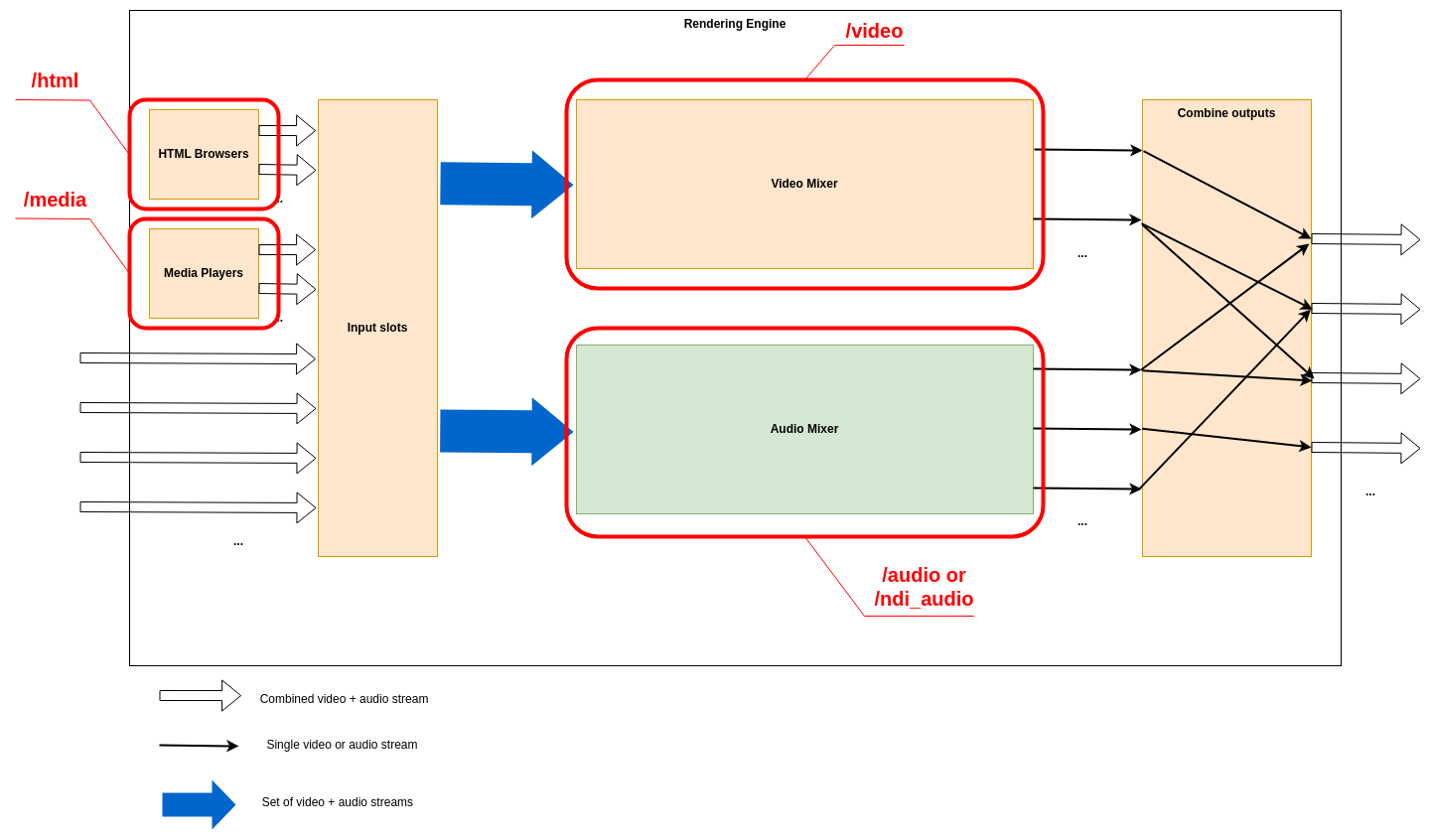

The Rendering Engine is an application that uses the Production Pipeline library in the base platform for transport, and adds to that media file playback, HTML rendering, a full video mixer and an audio mixer. The figure below shows a schematic of the different components and an example of how streams may transition through it.

HTML Renderers

Multiple HTML renderers can be instantiated in runtime by control panels, and in each an HTML page can be opened. Each HTML renderer produces a video stream, but no audio.

Media Players

Multiple media players can be instantiated in runtime by control panels, and in each a media file can be opened. Each media player produces a stream containing a video stream and all audio streams from the file.

Video Mixer

The Video mixer receives all video inputs into the system, i.e. streams from ingests, from HTML renderers and from media players. It outputs one or more named video output streams. The internal structure of the video mixer is defined at startup (as described in detail below), and controlling the video mixer is done in runtime by control panels.

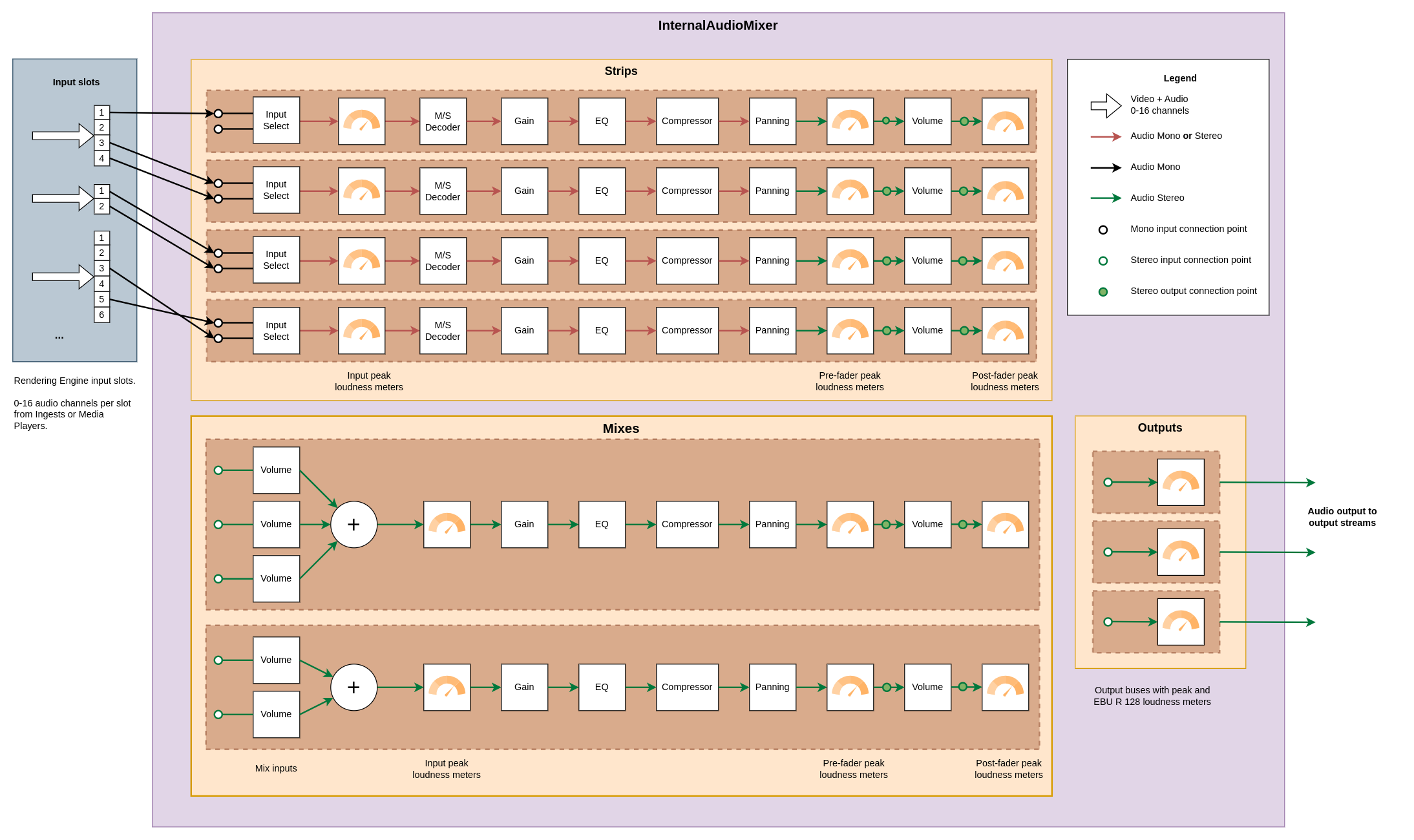

Audio Mixer

Note

The audio mixer can be either our internal audio mixer or an integration towards an external third party audio mixer. The rest of the documentation here is valid for the internal audio mixer.

The audio mixer takes a number of mono or stereo pair streams as inputs that we call input strips. It outputs a number of named output streams in stereo. The outputs of the audio mixer are defined at startup, and the audio mixer is controlled in runtime by control panels.

Combine outputs

The combination of video and audio outputs into full output streams from the rendering engine is configured at startup.

Configuring the Rendering Engine

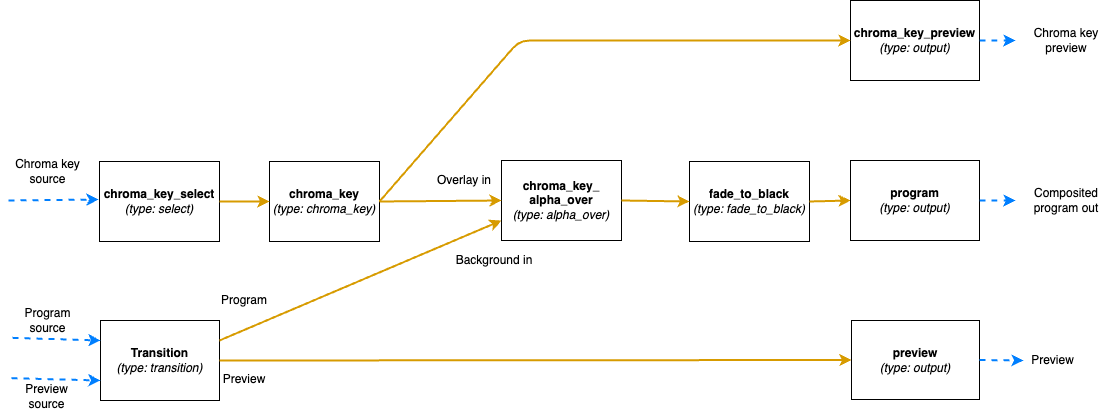

Some aspects of the rendering engine can be configured statically at startup through the use of a configuration file in JSON format. Specifically, the video mixer node graph, the audio mixer outputs and the combination of video and audio outputs can be configured. Exactly how the configuration file is specified at startup is covered in this guide.

As an example, here is the contents of such a JSON configuration file that we will refer to throughout this guide:

{

"version": "1.0",

"video": {

"nodes": {

"transition": {

"type": "transition"

},

"chroma_key_select": {

"type": "select"

},

"chroma_key": {

"type": "chroma_key"

},

"chroma_key_alpha_over": {

"type": "alpha_over"

},

"fade_to_black": {

"type": "fade_to_black"

},

"program": {

"type": "output"

},

"chroma_key_preview": {

"type": "output"

},

"preview": {

"type": "output"

}

},

"links": [

{

"from_node": "transition",

"from_socket": 0,

"to_node": "chroma_key_alpha_over",

"to_socket": 1

},

{

"from_node": "transition",

"from_socket": 1,

"to_node": "preview",

"to_socket": 0

},

{

"from_node": "chroma_key_select",

"from_socket": 0,

"to_node": "chroma_key",

"to_socket": 0

},

{

"from_node": "chroma_key",

"from_socket": 0,

"to_node": "chroma_key_alpha_over",

"to_socket": 0

},

{

"from_node": "chroma_key_alpha_over",

"from_socket": 0,

"to_node": "fade_to_black",

"to_socket": 0

},

{

"from_node": "fade_to_black",

"from_socket": 0,

"to_node": "program",

"to_socket": 0

},

{

"from_node": "chroma_key",

"from_socket": 0,

"to_node": "chroma_key_preview",

"to_socket": 0

}

]

},

"audio": {

"outputs": [

{

"name": "main",

"channels": 2

},

{

"name": "aux1",

"channels": 2

},

{

"name": "aux2",

"channels": 2

}

]

},

"output_mapping": [

{

"name": "program",

"video_output": "program",

"audio_output": "main",

"feedback_input_slot": 1001,

"stream": true

},

{

"name": "preview",

"video_output": "preview",

"audio_output": "",

"feedback_input_slot": 1002,

"stream": false

},

{

"name": "aux1",

"video_output": "program",

"audio_output": "aux1",

"feedback_input_slot": 0,

"stream": true

},

{

"name": "chroma_key_preview",

"video_output": "chroma_key_preview",

"audio_output": "aux2",

"feedback_input_slot": 100,

"stream": true

}

]

}

Video Mixer node graph

The video mixer is defined as a tree graph of nodes that work together in sequence to produce one or several video outputs. Each node performs a specific action, and has zero or more input sockets and zero or more output sockets. Links connect output sockets on one node to input sockets on other nodes. Each node is named and the name is used to control the node in runtime. The node tree configuration is specified in the video section of the JSON file, which contains two parts: the list of nodes and the list of links connecting the nodes.

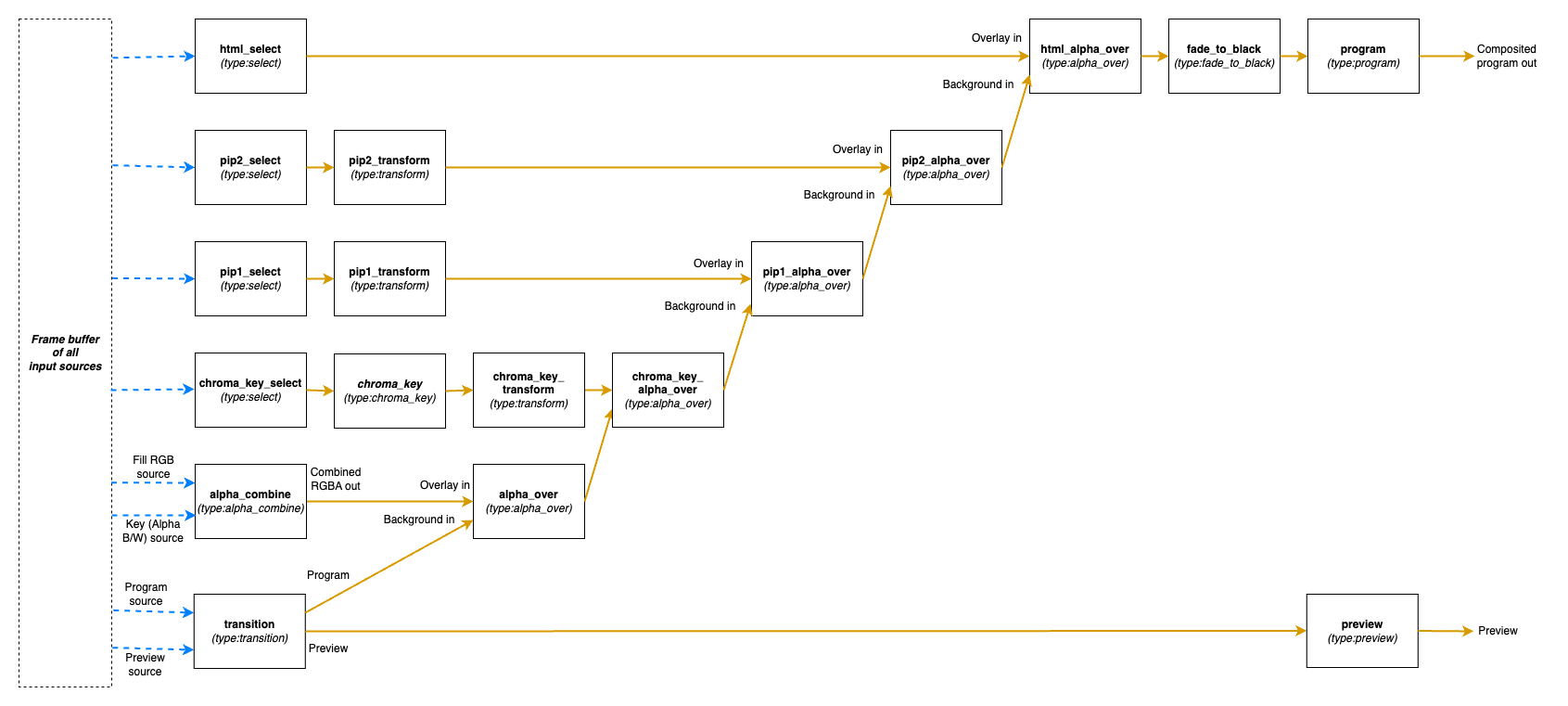

The following is a graphical representation of the video mixer node graph configuration in the JSON example file above, with two input nodes, three processing nodes and three output nodes, and links in between:

Video nodes block

The nodes object of the video block is a JSON object in which each name/key is the unique name of a node. The name is used to refer to the node when operating the mixer later on, so choose names that make each node's purpose in the production easy to understand.

The name can only contain lower case letters, digits, _ and -. Spaces and other special characters are not allowed.

Each node is a JSON object with parameters defining the node properties. Here the parameter type defines which type of node it is.

The supported types are listed below. They can be divided into three groups depending on whether they provide input to the graph, provide output from the graph, or are processing nodes placed in the middle of the graph.
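The naming rule for nodes can be checked mechanically before a configuration file is deployed. The following is a minimal Python sketch of such a check; the function name is hypothetical, and rejecting the empty string is an assumption, since the rule above does not mention empty names.

```python
import re

# Lower case letters, digits, "_" and "-" only, per the naming rule above.
# Assumption: at least one character is required.
NODE_NAME_PATTERN = re.compile(r"[a-z0-9_-]+")


def is_valid_node_name(name: str) -> bool:
    """Return True if the whole name consists of allowed characters only."""
    return NODE_NAME_PATTERN.fullmatch(name) is not None
```

For example, chroma_key_select passes this check, while a name containing a space or an upper case letter does not.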

Input nodes

The input nodes take their input from the input slots of the Rendering Engine, which contain the latest frame for all connected sources (this includes connected cameras, HTML graphics, media players etc.). The nodes in this group do not have any input sockets, as they take the input frames from the input slots of the Rendering Engine. Which slot to take the frames from is dynamically controlled during runtime.

Alpha combine node (alpha_combine)

Input sockets: 0 (Sources are taken from the input slots of the mixer)

Output sockets: 1

The alpha combine node takes two inputs and combines the color from one of them with the alpha from the other one. The node features multiple modes that can be set during runtime to either pick the alpha channel of the second video input, to use any of its R, G and B channels, or to average the RGB channels and use the result as alpha for the output.

This node is useful when video with alpha is provided from SDI sources, where the alpha must be sent as a separate video feed and then combined with the fill/color source in the mixer.

Select node (select)

Input sockets: 0 (Source is taken from the input slots of the mixer)

Output sockets: 1

The select node simply selects an input slot from the Rendering Engine and forwards that video stream.

Which input slot to forward is set during runtime.

The node is a variant of the transition node, but does not support the transitions supported by the transition node.

Transition node (transition)

Input sockets: 0 (Sources are taken from the input slots of the mixer)

Output sockets: 2 (One with the program output and one with the preview output)

The transition node takes two video streams from the Rendering Engine’s input slots, one to use as the program and one

as the preview output stream.

These are output through the two output sockets.

During runtime this node can be used to make transitions such as wipes and fades between the selected program and

preview.

Output nodes

This group only contains a single node, used to mark a point where processed video can exit the graph and be used in output mappings.

Output node (output)

Input sockets: 1

Output sockets: 0 (Output is sent out of the video mixer)

The output node marks an output point in the video mixer’s graph, where the video stream can be used by the output

mapping to be included in the Rendering Engine’s Output streams, or as streams to view in the multi-viewer.

The node takes a single input and makes it possible to use that video feed outside the video mixer.

Output nodes can be used to output the program and preview feeds of a video mixer, and also to mark auxiliary outputs. In the example above, chroma_key_preview is such an auxiliary output: it makes it possible to view the result of the chroma keying without the effect being keyed onto the program output.

Processing nodes

The processing nodes take their input from another node’s output and output a result that is sent to another node’s input. They are therefore placed in the middle of the graph, after nodes from the input group and before output nodes.

Alpha over node (alpha_over)

Input sockets: 2 (Index 0 for overlay video and index 1 for background video)

Output sockets: 1

The alpha over node composites the overlay video input on top of the background video input. The alpha of the overlay

video input is taken into consideration. During runtime, this node can be controlled to show or not to show the overlay,

and to fade the overlay in or out. This node is useful to composite things such as graphics or chroma keyed video onto a

background video.

Chroma key node (chroma_key)

Input sockets: 1

Output sockets: 1

The chroma key node takes an input video stream and performs chroma keying on it based on parameters set during runtime.

The video output will have the alpha channel (and in some cases also the color channels) altered. The result of this

node can then be composited on top of a background using an Alpha over node.

Crop node (crop)

Input sockets: 1

Output sockets: 1

The crop node takes an input video stream and crops the video based on parameters set during runtime. The parts of the video outside of the cropped area will be fully transparent.

Fade to black node (fade_to_black)

Input sockets: 1

Output sockets: 1

The fade to black node takes a single input video stream and can fade that video stream to and from black.

This node is normally used as the last node before the main program output node, to be able to fade to and from black at

the beginning and end of the broadcast.

Transform node (transform)

Input sockets: 1

Output sockets: 1

The transform node takes an input video stream and transforms it inside the visible canvas. This node can be

configured during runtime to scale and move the input video. This node is useful for picture-in-picture effects, or to

move a chroma key node output to the lower corner of the frame.

Video delay node (video_delay)

Input sockets: 1

Output sockets: 1

The video delay node is used to delay the video by a given number of frames. The number of frames to delay is controlled during runtime. This node is useful whenever a video stream needs to be delayed dynamically, for example when an external audio mixer is used, which introduces a delay of some frames.

Video links block

The links array in the video block is a list of links between video nodes. The video frames are fed in one direction

from node to node via these links.

An input socket on a node can only have one connected link.

The output sockets on a node can have multiple connected links.

Each link contains the following keys:

- from_node - The name of the node in the nodes object from which the link receives frames

- from_socket - The index of the output socket in the node from which this link originates

- to_node - The name of the node in the nodes object to which the link sends frames

- to_socket - The index of the input socket in the node to which this link connects
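The rules for links lend themselves to an offline sanity check of a video block. The following Python sketch is one possible check, not the validation the Rendering Engine itself performs: the function name and the error texts are hypothetical, while the socket counts are copied from the node descriptions above.

```python
# (input sockets, output sockets) per node type, as listed in the node descriptions.
SOCKETS = {
    "alpha_combine": (0, 1), "select": (0, 1), "transition": (0, 2),
    "output": (1, 0),
    "alpha_over": (2, 1), "chroma_key": (1, 1), "crop": (1, 1),
    "fade_to_black": (1, 1), "transform": (1, 1), "video_delay": (1, 1),
}


def check_video_graph(video: dict) -> list[str]:
    """Return a list of problems found in a "video" block; empty means none found."""
    problems = []
    nodes = video.get("nodes", {})
    for name, node in nodes.items():
        if node.get("type") not in SOCKETS:
            problems.append(f"node {name!r}: unknown type {node.get('type')!r}")
    used_inputs = set()
    for i, link in enumerate(video.get("links", [])):
        # Check both ends of the link: the output side (from) and the input side (to).
        for end, kind, slot in (("from", "output", 1), ("to", "input", 0)):
            node_name = link.get(f"{end}_node")
            socket = link.get(f"{end}_socket")
            node_type = nodes.get(node_name, {}).get("type")
            if node_name not in nodes:
                problems.append(f"link {i}: unknown {end}_node {node_name!r}")
            elif node_type in SOCKETS and not (
                isinstance(socket, int) and 0 <= socket < SOCKETS[node_type][slot]
            ):
                problems.append(f"link {i}: {node_name!r} has no {kind} socket {socket!r}")
        # An input socket can only have one connected link.
        target = (link.get("to_node"), link.get("to_socket"))
        if target in used_inputs:
            problems.append(f"link {i}: input socket {target} already has a link")
        used_inputs.add(target)
    return problems


router = {
    "nodes": {"input_select": {"type": "select"}, "program": {"type": "output"}},
    "links": [{"from_node": "input_select", "from_socket": 0,
               "to_node": "program", "to_socket": 0}],
}
problems = check_video_graph(router)
```

Output sockets are deliberately not tracked for reuse, since an output socket may feed several links.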

Some examples

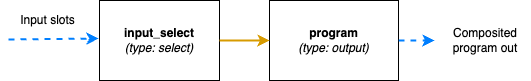

The simplest video mixer node graph imaginable would be a select node feeding an output node. This mixer would only be able to select one of the inputs and output it unaltered, like a video router would do:

The JSON file section for this is:

"video": {

"nodes": {

"input_select": {

"type": "select"

},

"program": {

"type": "output"

}

},

"links": [

{

"from_node": "input_select",

"from_socket": 0,

"to_node": "program",

"to_socket": 0

}

]

}

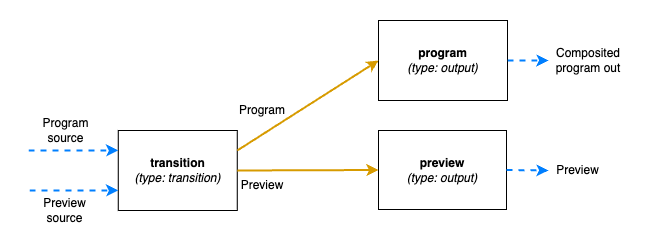

A slightly more advanced node graph would be to use a transition node and two outputs, one for the program out and one for the preview:

The JSON file section for this is:

"video": {

"nodes": {

"transition": {

"type": "transition"

},

"program": {

"type": "output"

},

"preview": {

"type": "output"

}

},

"links": [

{

"from_node": "transition",

"from_socket": 0,

"to_node": "program",

"to_socket": 0

},

{

"from_node": "transition",

"from_socket": 1,

"to_node": "preview",

"to_socket": 0

}

]

}

Audio outputs

The audio block of the configuration file defines the properties of the audio mixer.

The JSON object contains a list called outputs which lists the outputs of the audio mixer and the configuration of

each output.

Each output has these parameters:

- name - The unique name of this audio output, used to refer to it from the output_mapping block

- channels - The number of audio channels of this output

The input routing and configuration of the strips is made via the control command API.

Combine outputs

The output_mapping block is used to define outputs of the entire Rendering Engine, by combining outputs from the video

and audio mixers and configuring where those should be sent.

The output mapping is an array of output mappings. Each mapping has the following parameters:

- name - The unique name of the output mapping. This will be displayed in the REST API both for sources being streamed and sources with feedback streams that can be included in the multi-view

- video_output - The name of the video mixer node of type output to get the video stream from. Leave empty, or omit the key, to create an audio-only output

- audio_output - The name of the audio output to get the audio stream from. Leave empty, or omit the key, to create a video-only output

- feedback_input_slot - The input slot to use to feed this output back to the multi-view. The REST API may then refer to this input slot to include this output in a multi-view view. Value must be >= 100 as input slots up to 99 are reserved for “regular” sources. Use 0 to disable feedback of this stream.

- stream - Boolean value to tell if the output should be streamable and visible as an output in the REST API. If set to false the output will not turn up as a Pipeline Output in the REST API.
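The constraints on output mappings can be checked against the rest of the configuration before startup. The Python sketch below is one way to do that, under stated assumptions: the function name and messages are hypothetical, a missing feedback_input_slot is treated like 0, and this is not the check the Rendering Engine itself performs.

```python
def check_output_mapping(config: dict) -> list[str]:
    """Return a list of problems found in "output_mapping"; empty means none found."""
    video_nodes = config.get("video", {}).get("nodes", {})
    video_outputs = {n for n, node in video_nodes.items() if node.get("type") == "output"}
    audio_outputs = {o.get("name") for o in config.get("audio", {}).get("outputs", [])}
    problems, seen = [], set()
    for mapping in config.get("output_mapping", []):
        name = mapping.get("name")
        if name in seen:
            problems.append(f"{name!r}: mapping name is not unique")
        seen.add(name)
        video = mapping.get("video_output", "")  # empty or missing: audio-only output
        audio = mapping.get("audio_output", "")  # empty or missing: video-only output
        if video and video not in video_outputs:
            problems.append(f"{name!r}: no video output node named {video!r}")
        if audio and audio not in audio_outputs:
            problems.append(f"{name!r}: no audio output named {audio!r}")
        # Assumption: a missing feedback_input_slot is treated like 0 (disabled).
        slot = mapping.get("feedback_input_slot", 0)
        if slot != 0 and slot < 100:
            problems.append(f"{name!r}: feedback_input_slot {slot} is below 100")
    return problems


config = {
    "video": {"nodes": {"program": {"type": "output"}, "preview": {"type": "output"}}},
    "audio": {"outputs": [{"name": "main", "channels": 2}]},
    "output_mapping": [
        {"name": "program", "video_output": "program", "audio_output": "main",
         "feedback_input_slot": 1001, "stream": True},
        {"name": "preview", "video_output": "preview",
         "feedback_input_slot": 1002, "stream": False},
    ],
}
problems = check_output_mapping(config)
```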

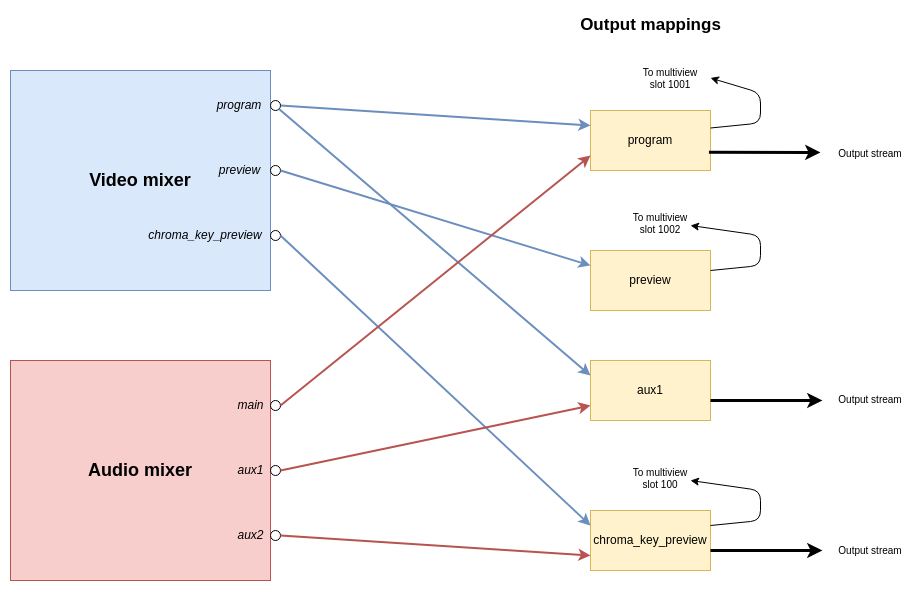

The following is a graphical visualisation of the output mappings in the JSON example configuration file above:

The default Rendering Engine config

If no Rendering Engine configuration file has been supplied by the user, a default configuration will be used. The following is the video node graph in the default configuration:

The full JSON for this default configuration is:

{

"version": "1.0",

"video" : {

"nodes":{

"transition": {"type":"transition"},

"alpha_combine": {"type":"alpha_combine"},

"chroma_key_select": {"type":"select"},

"chroma_key": {"type":"chroma_key"},

"chroma_key_transform": {"type":"transform"},

"pip1_select": {"type":"select"},

"pip1_transform": {"type":"transform"},

"pip2_select": {"type":"select"},

"pip2_transform": {"type":"transform"},

"html_select": {"type":"select"},

"alpha_over": {"type":"alpha_over"},

"chroma_key_alpha_over": {"type":"alpha_over"},

"pip1_alpha_over": {"type":"alpha_over"},

"pip2_alpha_over": {"type":"alpha_over"},

"html_alpha_over": {"type":"alpha_over"},

"fade_to_black": {"type":"fade_to_black"},

"program": {"type":"output"},

"preview": {"type":"output"}

},

"links":[

{"from_node":"transition", "from_socket":0, "to_node":"alpha_over", "to_socket":1},

{"from_node":"alpha_combine", "from_socket":0, "to_node":"alpha_over", "to_socket":0},

{"from_node":"alpha_over", "from_socket":0, "to_node":"chroma_key_alpha_over", "to_socket":1},

{"from_node":"chroma_key_select", "from_socket":0, "to_node":"chroma_key", "to_socket":0},

{"from_node":"chroma_key", "from_socket":0, "to_node":"chroma_key_transform", "to_socket":0},

{"from_node":"chroma_key_transform", "from_socket":0, "to_node":"chroma_key_alpha_over", "to_socket":0},

{"from_node":"chroma_key_alpha_over", "from_socket":0, "to_node":"pip1_alpha_over", "to_socket":1},

{"from_node":"pip1_select", "from_socket":0, "to_node":"pip1_transform", "to_socket":0},

{"from_node":"pip1_transform", "from_socket":0, "to_node":"pip1_alpha_over", "to_socket":0},

{"from_node":"pip1_alpha_over", "from_socket":0, "to_node":"pip2_alpha_over", "to_socket":1},

{"from_node":"pip2_select", "from_socket":0, "to_node":"pip2_transform", "to_socket":0},

{"from_node":"pip2_transform", "from_socket":0, "to_node":"pip2_alpha_over", "to_socket":0},

{"from_node":"pip2_alpha_over", "from_socket":0, "to_node":"html_alpha_over", "to_socket":1},

{"from_node":"html_select", "from_socket":0, "to_node":"html_alpha_over", "to_socket":0},

{"from_node":"html_alpha_over", "from_socket":0, "to_node":"fade_to_black", "to_socket":0},

{"from_node":"fade_to_black", "from_socket":0, "to_node":"program", "to_socket":0},

{"from_node":"transition", "from_socket":1, "to_node":"preview", "to_socket":0}

]

},

"audio" : {

"outputs": [

{"name": "main", "channels": 2}

]

},

"output_mapping" : [

{"name":"program", "video_output":"program", "audio_output":"main", "feedback_input_slot":1001, "stream":true},

{"name":"preview", "video_output":"preview", "feedback_input_slot":1002, "stream":false}

]

}

4 - Operational Control API

Ateliere Live Control API.

4.1 - Overview of JSON protocol

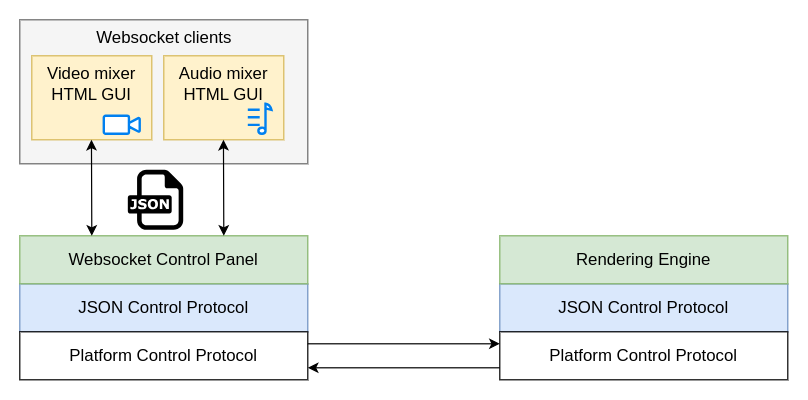

This is a description of the network protocol used to control, monitor and explore the components in a running Ateliere Live production.

The JSON protocol is used when connected to the Websocket Control Panel

(acl-websocketcontrolpanel) and has multi-client support, making it

possible for clients that are connected to the same production to mirror

each other’s controls.

The JSON protocol sits on top of an application-independent platform protocol which transports the JSON payload between the clients and the production.

Control Panel implementation

Note: This section is mainly needed if you plan on implementing a new control panel for the Ateliere Live Rendering Engine using the C++ SDK.

Most of the JSON messages described in this document are sent and received by the Websocket Control Panel using the ControlDataSender interface. The interface encapsulates the packing and unpacking of messages to and from the application-agnostic platform protocol. The platform protocol includes the addresses for the sender and receiver(s) of the messages but is unaware of the contents of the packages.

The ControlDataSender interface defines the callbacks that should be used for

handling messages coming from the production.

Response and status messages are received from the production by setting the

mResponseCallback and mStatusMessageCallback, respectively. The data

received in these callbacks are complete JSON messages conforming to the

specification in this document and can be forwarded without changes to the

connected clients. There are however some exceptions to this.

Events

Messages of the type event currently signal that a connection was opened or

closed in the production. Event messages originate in the control panel meaning

that implementing support for this message type is part of making a control

panel using the C++ SDK.

The control panel gets the events from the underlying platform through the

ControlDataSender::Settings::mConnectionEventCallback. By inspecting the

ConnectionEvent provided in the callback, clients connected to the control

panel can be informed about the event. This can be used by other control

panels, implemented using the C++ SDK.

Please see the section about the event message type under “Message types” for

information about the message syntax.

Hop count

Messages of different types need to propagate to different depths of the

production. A hop count in each message determines if it will be relayed

from one Rendering Engine to a possibly chained one behind it.

When receiving a JSON message from a client, the Websocket Control Panel will select a hop count based on the content of the message, before sending the message to the production.

The hop count is marked in the platform layer of the protocol when sending a

message using ControlDataSender::sendRequestToReceivers. It is not visible

in the JSON message.

For each type of message, the hop count used by the Websocket Control Panel is specified in a “Hop count” section within “Message types” below. This hop count should be used when implementing a new C++ control panel.

The state tree

The control API is based around a state tree that makes up the hierarchy of parameters and entities in the Rendering Engine that can be controlled or monitored. The tree is expressed as a JSON structure.

An entity in the tree is addressed by a path consisting of the names of each enclosing object surrounding the entity.

For instance, in the structure below, the path to the g-field of the

rgb_filter would be /video/nodes/rgb_filter/g:

{

"video": {

"nodes": {

"rgb_filter": {

"r": 0.5,

"g": 0.4,

"b": -0.2

}

}

}

}
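To make the addressing scheme concrete, the following Python sketch resolves such a path against a nested structure on the client side. The function name resolve is hypothetical and the error text merely mirrors the style of the error shown for get-response; how the production resolves paths internally is not described here.

```python
def resolve(tree: dict, path: str):
    """Follow a path such as "/video/nodes/rgb_filter/g" through nested dicts."""
    node = tree
    # Empty segments (from the leading "/") are skipped.
    for key in filter(None, path.split("/")):
        if not isinstance(node, dict) or key not in node:
            raise KeyError(f"No such resource: {path}")
        node = node[key]
    return node


state = {"video": {"nodes": {"rgb_filter": {"r": 0.5, "g": 0.4, "b": -0.2}}}}
value = resolve(state, "/video/nodes/rgb_filter/g")
```

Resolving a shorter path, such as /video/nodes, returns the whole sub-tree rooted at that point.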

Message format

JSON syntax is used for all messages. The type and resource fields are

present in all messages and define the type of message and which resource

is affected by this message.

{

"type": "...",

"resource": "..."

}

Message types

All changes that can be made to a production are available in the JSON API

through messages of type set or command.

A parameter that can be changed immediately, without side effects, and that

will keep its state until the next change can be accessed directly with a

set message. In other cases a command is necessary to initiate the change.

A set message might for instance be used for controlling the layer opacity

in an alpha-over node or the gain for an input to the audio mixer whereas a

command is needed to initiate the loading of a media file or a transition

effect spanning over a period of time.

To get the current state of a resource, a get message can be used. A resource

can also be monitored for changes using a subscribe message. When a

sub-resource is added or removed from the monitored resource, a state-add or

state-remove message is sent to the subscriber. Other changes result in a

state-change message.

Parameters that change continuously without any user input, such as the play

position of a media player or the loudness in an audio output, are referred to

as streaming parameters. Streaming parameters are monitored by sending a

sampling-start message to the resource path of the streaming parameter and

providing a time interval stating how often sampling-update messages should

be sent.

Table of message types

| Message type | Description |

|---|---|

| get | Get the value of a resource |

| get-response | The response to a get message |

| set | Change the value of a resource |

| set-response | The response to a set message |

| subscribe | Subscribe to state changes within a resource |

| subscribe-response | The response to a subscribe message |

| unsubscribe | Stop monitoring state changes within a resource |

| unsubscribe-response | The response to an unsubscribe message |

| command | Execute a command in a resource |

| command-response | The response to a command message |

| state-change | Describes the new state of a resource |

| state-add | A sub-resource was added to a resource |

| state-remove | A sub-resource was removed from a resource |

| subscription-list | List own subscriptions |

| subscription-list-response | The response to a subscription-list message |

| describe | Get a description of a resource |

| describe-response | The response to a describe message |

| sampling-start | Start sampling a streaming parameter |

| sampling-start-response | The response to a sampling-start message |

| sampling-stop | Stop sampling a streaming parameter |

| sampling-stop-response | The response to a sampling-stop message |

| sampling-list | List own streaming parameter subscriptions |

| sampling-list-response | The response to a sampling-list message |

| sampling-list-all | List all streaming parameter subscriptions |

| sampling-list-all-response | The response to a sampling-list-all message |

| sampling-update | Describes the latest sampled state of a streaming parameter |

| event | Various information. Only sent to clients of the Websocket Control Panel |

All response messages contain the field timestamp. This is the time when the request message was handled, i.e. the timestamp of the frame being processed at that time.

Each type of message is described in detail below.

get and get-response

A message of type get is used to retrieve the current state of a given

resource within the production.

Required fields for get messages:

| Parameter | Description |

|---|---|

| type | Must be “get” |

| resource | The path to get the current state from |

For instance:

{

"type": "get",

"resource": "/video/nodes"

}

The response is a get-response. A successful response contains a body

object with the current state of the resource:

{

"type": "get-response",

"resource": "/video/nodes",

"timestamp": 1731073800720000,

"body": {

...

}

}

The body has its root where the resource URI points.

An unsuccessful get has no body and contains an error message, for

instance:

{

"type": "get-response",

"resource": "/video/nodes",

"error": "No such resource: /video/nodes",

"timestamp": 1731073800720000

}

NOTE: Repeatedly calling get is a very inefficient way of monitoring the

state of a production. Please see the subscribe and state-change message

sections for a better way.
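As a minimal sketch of the request/response pair described above, the following Python helpers build a get message and unpack a get-response. The function names are hypothetical and not part of any Ateliere Live API; only the JSON fields come from this section.

```python
import json


def build_get(resource: str) -> str:
    """Serialize a get request for the given resource path."""
    return json.dumps({"type": "get", "resource": resource})


def parse_get_response(raw: str):
    """Return the body of a get-response, or raise if it reports an error."""
    msg = json.loads(raw)
    if msg.get("type") != "get-response":
        raise ValueError(f"unexpected message type: {msg.get('type')!r}")
    if "error" in msg:
        # An unsuccessful get has no body, only an error message.
        raise RuntimeError(msg["error"])
    return msg["body"]
```

Raising on the error field keeps callers from accidentally treating a failed get as an empty state.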

Hop count

In a proxy-editing setup, i.e. when there is one pipeline behind another one,

get messages will stop at the first one and the state for that pipeline

will be returned. This is applicable when using a Websocket Control Panel.

When implementing a new control panel using the C++ SDK, set the hop count

to 1 (one) for get messages.

set and set-response

A message of type set is used to change the state of one or more components

located at a specified resource path in the production. This can for instance

be to change the currently selected video source of a transition node or to

change the gain of an audio strip.

set messages are generally only available for changes which are immediate.

Please see the command message section for details about changes that

occur over a period of time.

Required parameters for set messages:

| Parameter | Description |

|---|---|

| type | Must be “set” |

| resource | The path to apply the changes to |

| body | The set of changes to apply |

The body of a set request contains the new values of the fields to change

within the resource path. The structure of the body does not need to be

complete - only the fields that should be updated need to be included.

Example of a set request that changes program and preview within a

node named my_transition_node:

{

"type": "set",

"resource": "/video/nodes/my_transition_node",

"body": {

"preview": 3,

"program": 8

}

}

The body does not need to be an object when setting a single item, just the

value. Here is an example:

{

"type": "set",

"resource": "/audio/strips/1/filters/gain/value",

"body": 8.6

}

The set-response does not contain a body with the set values - instead changes

in the production need to be monitored by subscribing to the affected

resources.

Please see the subscribe message section for details about subscriptions.

If there is an error, a set-response is returned containing an error

message. In this case none of the parameters in the

body of the set message will be applied. If the set was successful the

response will contain a result ok key/value.
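The following Python sketch builds set messages for both the partial-object and single-value forms shown above, and checks a set-response. The helper names are hypothetical, and the success check assumes that the "result ok key/value" is encoded as `"result": "ok"`, which this section does not spell out.

```python
def build_set(resource, changes):
    """Build a set request; `changes` is a partial object or a single value."""
    return {"type": "set", "resource": resource, "body": changes}


def set_succeeded(response):
    """True if a set-response reports success.

    Assumption: success is encoded as "result": "ok". When an "error" key is
    present, none of the requested changes were applied.
    """
    if response.get("type") != "set-response":
        raise ValueError("not a set-response")
    return "error" not in response and response.get("result") == "ok"
```

For example, `build_set("/audio/strips/1/filters/gain/value", 8.6)` reproduces the single-value request above.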

Hop count

In a proxy-editing setup, i.e. when there is one pipeline behind another one,

set messages will propagate all the way to the last pipeline while following

the configured alignment delay of each step. This is applicable when using a

Websocket Control Panel.

When implementing a new control panel using the C++ SDK, set the hop count

to -1 (negative one) for set messages.

command and command-response

Commands are used to initiate events in the production which are not immediate

or that do not have a direct relationship to one specific field of the

production state tree. For instance, when starting playback of a media file

there might be a play command. This will start the actual playback but also

change the player state (e.g. from paused to playing) and also start to

periodically update the current playback position field.

Required parameters for command messages:

| Parameter | Description |

|---|---|

| type | Must be “command” |

| resource | The resource receiving the command |

| body/command | The name of the command to execute |

| body/parameters | The set of parameters to the command. If the command takes no parameters, this can be skipped, or passed empty |

Example of a command that starts a fade command on a transition node:

{

"type": "command",

"resource": "/video/nodes/my_transition_node",

"body": {

"command": "fade",

"parameters": {

"duration_ms": 120

}

}

}

Example of a command that takes no parameters:

{

"type": "command",

"resource": "/video/nodes/my_transition_node",

"body": {

"command": "cut"

}

}

As for set-response messages, the command-response has no body - instead changes in

the production need to be monitored by subscribing to one or more resources.

Please see the subscribe message section for details about subscriptions.

If there is an error, a command-response is returned containing an error

message. If the command was successful the response will contain a result

ok key/value.
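A small Python sketch of how a client might assemble command messages, leaving out body/parameters when the command takes none. The helper name is hypothetical; the message layout follows the table and examples above.

```python
def build_command(resource, command, parameters=None):
    """Build a command request; `parameters` is skipped when empty or None."""
    body = {"command": command}
    if parameters:
        body["parameters"] = dict(parameters)
    return {"type": "command", "resource": resource, "body": body}
```

Calling `build_command("/video/nodes/my_transition_node", "fade", {"duration_ms": 120})` yields the fade example above, and omitting the third argument yields the cut example.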

Hop count

In a proxy-editing setup, i.e. when there is one pipeline behind another one,

command messages will propagate all the way to the last pipeline while

following the configured alignment delay of each step. This is applicable when

using a Websocket Control Panel.

When implementing a new control panel using the C++ SDK, set the hop count

to -1 (negative one) for command messages.

subscribe and subscribe-response

Subscriptions are used to monitor changes in the production. This can e.g. be useful in order to mirror the controls of different control surfaces that are connected to the same production, detect when new resources appear, or to otherwise visualize different parts of the system.

Required parameters for subscribe messages:

| Parameter | Description |

|---|---|

| type | Must be “subscribe” |

| resource | The path to monitor for changes |

Example of a subscribe message for tracking changes of the resource

/audio/strips/3/compressor:

{

"type": "subscribe",

"resource": "/audio/strips/3/compressor"

}

The response is a subscribe-response. A successful response contains a body

object with the current state of the resource, for instance:

{

"type": "subscribe-response",

"resource": "/audio/strips/3/compressor",

"body": {

"attack": 30,

"gain": 1,

"knee": 3.5,

"ratio": 3.3,

"release": 2000,

"threshold": -24,

"type": "compressor"

},

"timestamp": 1731073800720000

}

If there is an error, a subscribe-response is returned containing an error

message instead of a body.

Whenever a monitored resource changes, a state-change, state-add or

state-remove message is sent to all subscribers of that resource. Please see

the state-change, state-add and state-remove message sections for details

about this.

Hop count

In a proxy-editing setup, i.e. when there is one pipeline behind another one,

subscribe messages will stop at the first one and it is from this pipeline

that state changes will be reported. This is applicable when using a Websocket

Control Panel.

When implementing a new control panel using the C++ SDK, set the hop count

to 1 for subscribe messages.

unsubscribe and unsubscribe-response

To stop receiving updates when a monitored resource changes, an unsubscribe

message can be used.

Required parameters for unsubscribe messages:

| Parameter | Description |

|---|---|

| type | Must be “unsubscribe” |

| resource | The resource path to stop monitoring for changes |

If the unsubscribe message is successful, an unsubscribe-response is

returned:

{

"type": "unsubscribe-response",

"resource": "/audio/strips/3/compressor",

"timestamp": 1731073800720000

}

If there is an error, the unsubscribe-response contains an error message.

Hop count

In a proxy-editing setup, i.e. when there is one pipeline behind another one,

unsubscribe messages will stop at the first receiver of the message. This is

applicable when using a Websocket Control Panel.

When implementing a new control panel using the C++ SDK, set the hop count

to 1 for unsubscribe messages.

state-change

A state-change message describes the new state for a number of fields within

a specified resource. state-change messages are sent from the production

side, i.e. they are only received, never sent, by clients.

state-change messages are sent whenever the internal state of the production

changes, for instance when:

- a client has issued a set message

- a command that changes the state is being executed

- an automation changes the state over a period of time

- a component, such as a metering device, has new data to report

To avoid loops or unnecessary updates on the client side, the message includes

an actor field stating whether the receiver of the message or some other

entity caused the change to happen.

| actor value | Meaning |

|---|---|

| “self” | The receiver of the message caused the change |

| “other” | Another client caused the change |

| “system” | A change from within the system |

Format of the state-change message:

| Parameter | Description |

|---|---|

| type | Must be “state-change” |

| resource | The resource path that has changed |

| body | The fields that have changed within the resource |

| actor | The entity responsible for the change |

Below is an example state-change message where the client receiving the

message also caused the change to happen:

{

"type": "state-change",

"resource": "/audio/strips/3/compressor",

"actor": "self",

"body": {

"gain": 2.5,

"ratio": 4.0

}

}
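One way a client could use state-change messages is to keep a local mirror of the state tree. The Python sketch below merges the changed fields into a nested dictionary and can skip changes the client caused itself. All function names are hypothetical, and the sketch assumes the body is a JSON object rooted at the resource path.

```python
def _resolve(tree, resource):
    """Walk (and create if needed) the nested dict that `resource` points to."""
    node = tree
    for part in (p for p in resource.split("/") if p):
        node = node.setdefault(part, {})
    return node


def _merge(dst, src):
    """Recursively copy the changed fields from `src` into `dst`."""
    for key, value in src.items():
        if isinstance(value, dict) and isinstance(dst.get(key), dict):
            _merge(dst[key], value)
        else:
            dst[key] = value


def apply_state_change(tree, msg, skip_self=True):
    """Apply a state-change to the local mirror; return True if applied."""
    if msg.get("type") != "state-change":
        raise ValueError("not a state-change message")
    if skip_self and msg.get("actor") == "self":
        # The receiver caused this change, so its own view is already current.
        return False
    _merge(_resolve(tree, msg["resource"]), msg["body"])
    return True
```

Skipping "self" changes is optional; a control surface that does not update its own view optimistically would pass `skip_self=False`.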

state-add and state-remove

The state-add and state-remove messages are similar to the state-change

message. The top level resource field is the resource the client subscribes to.

In the body of the message there is another resource field telling what

sub-resource has been added or removed.

Examples:

{

"type": "state-add",

"resource": "/audio",

"actor": "system",

"body": {

"resource": "/strips/2"

}

}

{

"type": "state-remove",

"resource": "/audio",

"actor": "system",

"body": {

"resource": "/strips/2"

}

}
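To act on a state-add or state-remove message, a client typically needs the full path of the affected sub-resource. The Python sketch below joins the two resource fields, assuming (as the examples above suggest) that the path in the body is relative to the subscribed resource. The function name is hypothetical.

```python
def affected_resource(msg):
    """Return the full path of the sub-resource that was added or removed."""
    if msg.get("type") not in ("state-add", "state-remove"):
        raise ValueError("not a state-add or state-remove message")
    base = msg["resource"].rstrip("/")
    sub = msg["body"]["resource"].lstrip("/")
    return f"{base}/{sub}"
```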

subscription-list and subscription-list-response

The subscription-list message is used to list own subscriptions. The response

message shows the client’s subscriptions, located under a given resource point

in the tree.

Required fields for subscription-list messages:

| Parameter | Description |

|---|---|

| type | Must be “subscription-list” |

| resource | The resource path to start looking for subscriptions from |

For instance:

{

"type": "subscription-list",

"resource": "/video"

}

The response is a subscription-list-response. A successful response contains a
body object listing the client’s subscriptions found under the resource:

{

"type": "subscription-list-response",

"resource": "/video",

"timestamp": 1731414140040000,

"body": {

"subscriptions": [

"/nodes/alpha_combine",

"/nodes/alpha_over"

]

}

}

The body has its root where the resource URI points.

An unsuccessful request has no body and contains an error message, for

instance:

{

"type": "subscription-list-response",

"resource": "/video/nodes/over",

"timestamp": 1731414382900000,

"error": "Failed to find resource with name 'over'"

}

Hop count

In a proxy-editing setup, i.e. when there is one pipeline behind another one,

subscription-list messages will stop at the first one, and the subscriptions

for that pipeline will be returned. This is applicable when using a Websocket

Control Panel.

When implementing a new control panel using the C++ SDK, set the hop count

to 1 for subscription-list messages.

describe and describe-response

The purpose of these messages is to get a description of some resource in the state tree, like a node in the video mixer component for example. The descriptions will list all commands and parameters which are valid for the resource.

Required fields for describe messages:

| Parameter | Description |

|---|---|

| type | Must be “describe” |

| resource | The path to the resource to describe |

This is the description for /video/nodes/fade_to_black:

{

"type": "describe-response",

"resource": "/video/nodes/fade_to_black",

"timestamp": 1731480819600000,

"body": {

"children": [],

"commands": [

{

"command": "fade_from",

"optional_parameters": [],

"required_parameters": [...],

...

},

...

],

"parameters": [

...

]

}

}

Hop count

In a proxy-editing setup, i.e. when there is one pipeline behind another one,

describe messages will stop at the first receiver of the message. This is

applicable when using a Websocket Control Panel.

When implementing a new control panel using the C++ SDK, set the hop count

to 1 for describe messages.
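The hop count recommendations given in the Hop count sections above can be collected in one lookup table. The Python sketch below is only an illustration of that summary; the table and function names are hypothetical, and the actual hop count is set through the C++ SDK, whose call for doing so is not shown here.

```python
# Recommended hop counts per message type, as stated in the sections above.
# 1 stops at the first pipeline; -1 propagates to the last pipeline.
HOP_COUNT = {
    "get": 1,
    "subscribe": 1,
    "unsubscribe": 1,
    "subscription-list": 1,
    "describe": 1,
    "set": -1,
    "command": -1,
}


def hop_count_for(message_type):
    """Look up the recommended hop count; KeyError if none is documented."""
    return HOP_COUNT[message_type]
```

Message types without a stated recommendation, such as the sampling messages, are deliberately left out rather than guessed.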

sampling-start and sampling-start-response

The sampling-start message is used to start sampling a streaming parameter.

If successful, sampling-update messages will be sent to the subscriber

periodically at the specified time interval.

Required parameters for sampling-start messages:

| Parameter | Description |

|---|---|

| type | Must be “sampling-start” |

| resource | An expression describing the streaming parameter(s) to start sampling |

| body/interval_ms | The update interval |

An asterisk (*) can be used at any level of the resource expression to

indicate “all components at this level”. If an asterisk is used, no other

characters are allowed at that level of the path.

For instance, "resource": "/audio/mixes/*/input_meter/*" can be used to start

sampling all streaming parameters under input_meter for all audio mixes.

The interval_ms parameter must be greater than or equal to 40.

Example:

{

"type": "sampling-start",

"resource": "/audio/mixes/*/input_meter/*",

"body": {

"interval_ms": 100

}

}

If there is an error, a sampling-start-response is returned containing an

error message. If the sampling-start was successful the response will

contain a result ok key/value.
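The two rules above (an asterisk must be alone at its level, and interval_ms must be at least 40) can be checked on the client before sending. The Python sketch below shows one way to do that; the function names and the constant are hypothetical, and the server remains the authority on what it accepts.

```python
MIN_INTERVAL_MS = 40  # lower bound for interval_ms stated above


def validate_sampling_resource(resource):
    """Raise ValueError if an asterisk shares a path level with other characters."""
    if not resource.startswith("/"):
        raise ValueError("resource must start with '/'")
    for level in resource.split("/")[1:]:
        if "*" in level and level != "*":
            raise ValueError(f"'*' must be the only character in level {level!r}")


def build_sampling_start(resource, interval_ms):
    """Build a sampling-start request after checking both rules."""
    validate_sampling_resource(resource)
    if interval_ms < MIN_INTERVAL_MS:
        raise ValueError(f"interval_ms must be >= {MIN_INTERVAL_MS}")
    return {
        "type": "sampling-start",
        "resource": resource,
        "body": {"interval_ms": interval_ms},
    }
```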

sampling-stop and sampling-stop-response

The sampling-stop message is used to stop sampling a streaming parameter.

Required parameters for sampling-stop messages:

| Parameter | Description |

|---|---|

| type | Must be “sampling-stop” |

| resource | An expression used to start sampling one or more streaming parameters |

The resource parameter must match one used to start sampling exactly.

Example:

{

"type": "sampling-stop",

"resource": "/audio/mixes/*/input_meter/*"

}

If there is an error, a sampling-stop-response is returned containing an

error message. If the sampling-stop was successful the response will

contain a result ok key/value.

sampling-list and sampling-list-response

The sampling-list message is used to list the streaming parameter

subscriptions that are registered to the client sending the message.

Required parameters for sampling-list messages:

| Parameter | Description |

|---|---|

| type | Must be “sampling-list” |

| resource | Must be “/” |

Example:

{

"type": "sampling-list",

"resource": "/"

}

If there is an error, a sampling-list-response is returned containing an

error message. If the sampling-list was successful the response will

contain a list of all the sender’s streaming parameter subscriptions.

Example:

{

"type": "sampling-list-response",

"resource": "/",

"body": {

"samplings": [

"/audio/strips/1/pre_filter_meter/*",

"/audio/mixes/0/input_meter/*"

]

},

"timestamp": 1736860857880000

}

sampling-update

A sampling-update message describes the latest sampled state of one or more

streaming parameters matching a subscription. The message is sent periodically

to clients that have started subscriptions using sampling-start.

The message contains the resource expression used in sampling-start. If

necessary this can be used to identify the subscription in the client.

The body of the message contains the updated state for all of the streaming

parameters matching the subscription. Note that the body starts at the root of

the state tree, which is different from e.g. get responses or subscriptions

for non-streaming parameters.

Example:

{

"type": "sampling-update",

"resource": "/audio/strips/*/pre_filter_meter/*",

"body": {

"audio": {

"strips": {

"1": {

"pre_filter_meter": {

"peak": -0.439775225995602234

}

},

"2": {

"pre_filter_meter": {

"peak": -0.982362873895602837

}

}

}

}

},

"timestamp": 1736861180080000

}
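Because the body of a sampling-update starts at the root of the state tree, it can be convenient to flatten it into full resource paths. The Python sketch below does this with a hypothetical helper; it assumes every leaf in the body is a sampled value and every object is an intermediate level.

```python
def flatten_sampling_body(body, prefix=""):
    """Map each leaf of a sampling-update body to its absolute resource path."""
    flat = {}
    for key, value in body.items():
        path = f"{prefix}/{key}"
        if isinstance(value, dict):
            flat.update(flatten_sampling_body(value, path))
        else:
            flat[path] = value
    return flat
```

Applied to the example above, this gives one entry per strip, keyed by paths such as /audio/strips/1/pre_filter_meter/peak.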

event

When connected to a Websocket Control Panel it will act as a proxy in front of

the Rendering Engine. This introduces a message of type event, which is only

used between the Websocket Control Panel and its clients.

Messages of type event contain an event field specifying the type of event.

All clients connected to the Websocket Control Panel will receive events of type

connect whenever a new connection is established between the Websocket Control

Panel and a Rendering Engine. Such a message might look like:

{

"type": "event",

"connected_node": "<uuid>",

"address": "<uuid>:<uuid>:...",

"event": "connect"

}

where connected_node is the UUID of the newly connected node (Rendering Engine) and

address is a colon separated list of UUIDs, which is the address to the node that

discovered the connection. For connect events this is currently only the Websocket

Control Panel itself, meaning the address is always the Websocket Control Panel’s.

When a connection between a client and a Websocket Control Panel is established,

the client will receive connect events for all Rendering Engines that were

already connected to the Websocket Control Panel, to let the client know it

is connected to a production.

If the connection between the Websocket Control Panel and the Rendering Engine

breaks, whether it is disconnected on purpose or gets disconnected due to a network

outage, the Websocket Control Panel will send an event message to all its clients.

{

"type": "event",

"disconnected_node": "<uuid>",

"address": "<uuid>:<uuid>:...",

"event": "disconnect"

}

A similar message is also sent to the clients in case the connection between two

Rendering Engines/Pipelines is torn down or lost, such as between a Low Delay

and a High Quality Pipeline. In that case the address parameter will be the UUID

of the Websocket Control Panel followed by the UUID of the LD Pipeline and the

disconnected_node is the UUID of the HQ Pipeline.
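A client of the Websocket Control Panel could track which nodes are currently reachable by processing connect and disconnect events. The Python sketch below is one possible shape for that bookkeeping; the class and function names are hypothetical, and only the two event types described above are handled.

```python
def parse_event(msg):
    """Return (event, node_uuid, address_parts) for a connect/disconnect event."""
    if msg.get("type") != "event":
        raise ValueError("not an event message")
    event = msg["event"]
    node_key = {"connect": "connected_node", "disconnect": "disconnected_node"}[event]
    # The address is a colon-separated list of UUIDs.
    return event, msg[node_key], msg["address"].split(":")


class ConnectionTracker:
    """Keeps the set of node UUIDs currently reported as connected."""

    def __init__(self):
        self.nodes = set()

    def handle(self, msg):
        event, node, _address = parse_event(msg)
        if event == "connect":
            self.nodes.add(node)
        else:
            self.nodes.discard(node)
```

Using `discard` rather than `remove` keeps the tracker tolerant of a disconnect for a node it never saw connect.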

4.2 - Rendering Engine components

This page describes the parameters and commands that are used for controlling the video mixer, audio mixer, HTML renderers and media players in the Ateliere Live Rendering Engine. This topic is closely related to this page on how to configure the rendering engine at startup.

Control command protocol

All commands to the Ateliere Live Rendering Engine are sent as human readable JSON objects and are listed below.

Each subsystem of the rendering engine has its own set of parameters and commands, and can be reached using:

- /video for the video mixer
- /audio for the audio mixer (if using the built-in mixer)
- /ndi_audio for the NDI audio mixer
- /html for the html renderer instances
- /media for the media playback instances

Video mixer resources

Background

The video mixer is built as a tree graph of processing nodes. Please see this page for further information on the video mixer node graph. The names of the nodes defined in the node graph are used as part of the resources: /video/nodes/{node_name}. All nodes have the parameter type, which describes which type of video node it is.

There are 10 different node types.

- The Transition node is used to pick which input slots to use for the program and preview output. The node also supports making transitions between the program and preview.
- The Select node simply forwards one of the available sources to its output.
- The Alpha combine node is used to pick two input slots (or the same input slot) to copy the color from one input and combine it with some information from the other input to form the alpha. The color will just be copied from the color input frame, but there are several modes that can be used to produce the alpha channel in the output frame in different ways. This is known as “key & fill” in broadcasting.
- The Alpha over node is used to perform an “Alpha Over” operation, that is to put the overlay video stream on top of the background video stream, and let the background be seen through the overlay depending on the alpha of the overlay. The node also features fading the graphics in and out by multiplying the alpha channel by a constant factor.
- The Transform node takes one input stream and transforms it (scaling and translating) to one output stream.
- The Chroma Key node takes one input stream, and by setting appropriate parameters for the keying, it will remove areas with the key color from the incoming video stream both affecting the alpha and color channels.
- The Fade to black node takes one input stream, which it can fade to or from black gradually, and then outputs that stream.
- The Output node has one input stream and will output that stream out from the video mixer, back to the Rendering Engine. It has no control commands.
- The Crop node takes one input stream and can crop a video stream to a new size.
- The Video delay node takes one input stream and will hold the frames for a configured amount of time before forwarding the frames, causing a delay of the video output.

To reset the runtime configuration of all video nodes to their default state use the reset command of the /video resource.

Transition

The transition node picks a program and a preview video source from the input slots and forwards these to other nodes. The node also features auto transitions between the program and the preview sources. Some transitions, for example wipes, last over a duration of time. These can be performed either automatically or manually.

In automatic mode, the operator first selects the type of transition, for instance a fade, sets the preview to the input slot to fade to, and then triggers the transition at the right time with an auto command that carries the duration of the transition.

In manual mode, the control panel sets the exact position of the transition through the factor parameter. This is used for implementing T-bars, where the T-bar repeatedly sends the current position of the bar. As in automatic mode, the transition type is set before the transition begins.

Note that manually setting the transition position/factor overrides an automatic transition in progress: the automatic transition is interrupted and the output jumps to the manually set position.

resource: /video/nodes/{node name} type: transition

Parameters

| Name | Type | Access Mode | Default | Description |

|---|---|---|---|---|

| factor | float | read-write | 0 | The mix factor between the program and the preview input source, in the range 0.0 to 1.0. For example 0.3 means 30% transition from program to preview. The visible effect is dependent on the transition mode used. |

| mode | string | read-write | fade | The transition mode to use (fade, wipe_left, wipe_right) |

| preview | uint32 | read-write | 0 | The currently used input slot for the preview |

| program | uint32 | read-write | 0 | The currently used input slot for the program |

| type | string | read-only | transition | The video node type |

Example `set` message

Below is a JSON example that includes all writable parameters for this resource. A `set` message may have a subset of these parameters, so exclude the ones you don't need to set.

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

{

"type": "set",

"resource": "/video/nodes/{node name}",

"body": {

"factor": 0.0,

"mode": "fade",

"preview": 0,

"program": 0

}

}

Commands

auto

Start an auto transition with the currently selected transition type over a given time period

Parameters

| Name | Type | Required/optional | Description |

|---|---|---|---|

| duration_ms | uint32 | required | The duration in milliseconds of the automatic transition |

Command template

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

Replace parameter values, enclosed by "<>", according to their data type.

{

"type": "command",

"resource": "/video/nodes/{node name}",

"body": {

"command": "auto",

"parameters": {

"duration_ms": <uint32>

}

}

}

cut

Make a cut by swapping the program and preview inputs

Command template

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

{

"type": "command",

"resource": "/video/nodes/{node name}",

"body": {

"command": "cut"

}

}
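The automatic and manual transition modes described for this node can be sketched as message builders in Python. The function names are hypothetical; the parameter names (mode, preview, factor, duration_ms) and the mode values come from the tables above. Clamping the T-bar position to the 0.0 to 1.0 range is a client-side choice, not documented server behavior.

```python
TRANSITION_MODES = ("fade", "wipe_left", "wipe_right")


def auto_transition(node_name, mode, preview, duration_ms):
    """Messages for an automatic transition: select mode and preview, then trigger."""
    if mode not in TRANSITION_MODES:
        raise ValueError(f"unknown transition mode: {mode!r}")
    resource = f"/video/nodes/{node_name}"
    return [
        {"type": "set", "resource": resource,
         "body": {"mode": mode, "preview": preview}},
        {"type": "command", "resource": resource,
         "body": {"command": "auto", "parameters": {"duration_ms": duration_ms}}},
    ]


def tbar_update(node_name, position):
    """Message for a manual transition: send the current T-bar position as factor."""
    factor = min(1.0, max(0.0, float(position)))
    return {"type": "set", "resource": f"/video/nodes/{node_name}",
            "body": {"factor": factor}}
```

A T-bar implementation would call `tbar_update` each time the bar moves, while a button-driven panel would send the two messages from `auto_transition` in order.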

Select

A node to select a video source from the input slots and send it on to the next node.

resource: /video/nodes/{node name} type: select

Parameters

| Name | Type | Access Mode | Default | Description |

|---|---|---|---|---|

| input | uint32 | read-write | 0 | Which input slot the video stream is currently picked from |

| type | string | read-only | select | The video node type |

Example `set` message

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

{

"type": "set",

"resource": "/video/nodes/{node name}",

"body": {

"input": 0

}

}

Alpha combine

A node to combine the color channels of one video stream with the alpha from another. This node is useful for video sources where the alpha channel is provided as a separate black and white video source that must be combined with the color source. The node supports multiple modes of obtaining the alpha, either by copying a specific color or alpha channel of some input slot, or by taking the average of the R, G and B channels of the video from some input slot.

resource: /video/nodes/{node name} type: alpha_combine

Parameters

| Name | Type | Access Mode | Default | Description |

|---|---|---|---|---|

| alpha | uint32 | read-write | 0 | The input slot to get the alpha input source from |

| color | uint32 | read-write | 0 | The input slot to get the color input source from |

| mode | string | read-write | average-rgb | The mode to use for combining the color and alpha input sources (copy-r, copy-g, copy-b, copy-a, average-rgb) |

| type | string | read-only | alpha_combine | The video node type |

Example `set` message

Below is a JSON example that includes all writable parameters for this resource. A `set` message may have a subset of these parameters, so exclude the ones you don't need to set.

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

{

"type": "set",

"resource": "/video/nodes/{node name}",

"body": {

"alpha": 0,

"color": 0,

"mode": "average-rgb"

}

}
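To illustrate what the modes of this node mean, the Python sketch below computes one output pixel from a color pixel and an alpha-source pixel. It is a conceptual model only, assuming normalized (r, g, b, a) values in the range 0.0 to 1.0; the function name is hypothetical, and the Rendering Engine's actual implementation is not described in this document.

```python
def combine_alpha(color_px, alpha_px, mode="average-rgb"):
    """Return (r, g, b, a): color from `color_px`, alpha derived from `alpha_px`."""
    r, g, b, a = alpha_px
    alpha_by_mode = {
        "copy-r": r,
        "copy-g": g,
        "copy-b": b,
        "copy-a": a,
        "average-rgb": (r + g + b) / 3.0,
    }
    if mode not in alpha_by_mode:
        raise ValueError(f"unknown mode: {mode!r}")
    # The color channels are copied unchanged from the color input.
    return (color_px[0], color_px[1], color_px[2], alpha_by_mode[mode])
```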

Alpha over

A node to combine two video streams using alpha over compositing, overlaying the foreground stream on the background stream. The node will keep the transparency of both layers. The overlay stream can be faded in and out of the background stream.

resource: /video/nodes/{node name} type: alpha_over

Parameters

| Name | Type | Access Mode | Default | Description |

|---|---|---|---|---|

| factor | float | read-write | 0 | The compositing factor. Range 0.0 to 1.0, where 0.0 means that the overlay is not composited on to the background and 1.0 means the overlay is fully visible on top of the background input. |

| type | string | read-only | alpha_over | The video node type |

Example `set` message

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

{

"type": "set",

"resource": "/video/nodes/{node name}",

"body": {

"factor": 0.0

}

}

Commands

fade_from

Fade away the overlay over a given time period

Parameters

| Name | Type | Required/optional | Description |

|---|---|---|---|

| duration_ms | uint32 | required | The duration of the automatic transition in milliseconds. |

Command template

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

Replace parameter values, enclosed by "<>", according to their data type.

{

"type": "command",

"resource": "/video/nodes/{node name}",

"body": {

"command": "fade_from",

"parameters": {

"duration_ms": <uint32>

}

}

}

fade_to

Fade to fully visible overlay over a given time period

Parameters

| Name | Type | Required/optional | Description |

|---|---|---|---|

| duration_ms | uint32 | required | The duration of the automatic transition in milliseconds. |

Command template

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

Replace parameter values, enclosed by "<>", according to their data type.

{

"type": "command",

"resource": "/video/nodes/{node name}",

"body": {

"command": "fade_to",

"parameters": {

"duration_ms": <uint32>

}

}

}

Transform

A node to transform an incoming video stream by scaling and translating it. The canvas size of the input is kept, and if the source video is shrunk, the surrounding area is filled with transparent black.

resource: /video/nodes/{node name} type: transform

Parameters

| Name | Type | Access Mode | Default | Description |

|---|---|---|---|---|

| scale | float | read-write | 1 | The relative scale of the video stream. Use 1.0 for original scale. |

| type | string | read-only | transform | The video node type |

| x | float | read-write | 0 | The X position of the upper left corner of the image as a fraction of the canvas’ width. For example use 0.0 to snap it to the left edge, or 0.5 to the center of the image |

| y | float | read-write | 0 | The Y position of the upper left corner of the image as a fraction of the canvas’ height. For example use 0.0 to snap it to the top edge, or 0.5 to the center of the image |

Example `set` message

Below is a JSON example that includes all writable parameters for this resource. A `set` message may have a subset of these parameters, so exclude the ones you don't need to set.

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

{

"type": "set",

"resource": "/video/nodes/{node name}",

"body": {

"scale": 1.0,

"x": 0.0,

"y": 0.0

}

}
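The relationship between the scale, x and y parameters and the resulting picture placement can be sketched as follows. This Python helper is hypothetical and assumes that the source fills the canvas at scale 1.0 and that positions map linearly to pixels; how the Rendering Engine rounds to whole pixels is not specified here.

```python
def transformed_rect(canvas_width, canvas_height, scale, x, y):
    """Return (left, top, width, height) of the transformed image in pixels."""
    left = x * canvas_width        # x is a fraction of the canvas width
    top = y * canvas_height        # y is a fraction of the canvas height
    width = scale * canvas_width   # assumes the source fills the canvas at 1.0
    height = scale * canvas_height
    return (left, top, width, height)
```

For instance, on a 1920x1080 canvas, scale 0.5 with x and y both 0.5 places a half-size picture in the lower right quadrant.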

Chroma key

A node to perform chroma keying on an incoming video stream. The output video stream will have the alpha and possibly the color channels modified, according to the parameter values in this node.

To remove a color from the incoming video stream, first enable the node and then select the key color to remove. The key color can be selected in two ways, either by manually setting the color with the R, G and B channel values, or by using the color picker. When using the color picker, the color picker command defines the position and size of the color picker square used to sample the incoming video stream. The R, G and B color parameters will be updated according to the average color of the area when the command was received by the Rendering Engine.

The currently selected color can be shown in the upper left hand corner in the output video stream of the node by setting the parameter show_key_color to true. Also, the latest sampled color picker area can be drawn in the node’s output by setting show_color_picker to true.

When a suitable color has been chosen, adjust the distance and falloff parameters to get a clear mask. To aid the tweaking of the parameters, set the show_alpha parameter to true. This will make the node output the black and white mask instead of the keyed result, which makes it easier to see which parts are masked away and which are not. Remember to turn this off before going on air.

As a last step, any remaining fringes of the key color around the subject can be desaturated with the color_spill parameter. Keep in mind that this also desaturates colors close to the key color in parts of the frame that are fully visible.

resource: /video/nodes/{node name} type: chroma_key

Parameters

| Name | Type | Access Mode | Default | Description |
|---|---|---|---|---|
| color_spill | float | read-write | 0.1 | Desaturation factor of colors that are close to the key color, without changing the alpha. Range 0.0 to 1.0, where 0.0 keeps the current saturation. |
| distance | float | read-write | 0.1 | The maximum deviation from the selected key color that is also considered part of the color to mask away. Range 0.0 to 1.0, where 0.0 means only the exact key color will be removed and greater values mean that colors further away from the key color are also removed. |
| enabled | bool | read-write | false | When set to true the node is enabled; when set to false the node is bypassed. |
| falloff | float | read-write | 0.08 | The falloff factor used to smooth out the edge in the mask between which colors are fully removed and which are fully kept, by making the colors in between semi-transparent. Range 0.0 to 1.0, where 0.0 means sharp edges. |
| show_alpha | bool | read-write | false | Switch on to show the resulting alpha channel as output instead of the keyed result, which makes it easier to see which parts are masked away and which are not. Make sure to turn this off before going on air. |
| show_color_picker | bool | read-write | false | Controls the visibility of the color picker area in the output video. The marker will show the latest sampled area in the video stream. Make sure to turn this off before going on air. |
| show_key_color | bool | read-write | false | Controls the visibility of the currently used key color as a small square in the upper left corner of the image. Make sure to turn this off before going on air. |
| type | string | read-only | chroma_key | The video node type |
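
To get a feel for how distance and falloff interact, the following Python sketch models one plausible reading of the parameter descriptions: colors within distance of the key color are fully removed, colors beyond distance plus falloff are fully kept, and alpha rises linearly in between. The function name `key_alpha`, the Euclidean RGB metric and the linear ramp are assumptions made for illustration only; the Rendering Engine may compute the mask differently.

```python
import math


def key_alpha(pixel, key, distance=0.1, falloff=0.08):
    """Return an illustrative alpha in [0.0, 1.0] for an RGB pixel.

    pixel and key are (r, g, b) tuples with channels in 0.0 to 1.0.
    """
    # Assumed metric: Euclidean RGB distance, scaled so that the
    # black-to-white distance equals 1.0.
    d = math.dist(pixel, key) / math.sqrt(3.0)
    if d <= distance:
        return 0.0  # treated as key color: fully removed
    if falloff <= 0.0 or d >= distance + falloff:
        return 1.0  # far from the key color: fully kept
    # Assumed linear ramp across the falloff band (semi-transparent).
    return (d - distance) / falloff
```

With falloff set to 0.0 the function jumps straight from 0.0 to 1.0, which matches the "sharp edges" behavior described in the table.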

Example `set` message

Below is a JSON example that includes all writable parameters for this resource. A `set` message may have a subset of these parameters, so exclude the ones you don't need to set.

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

{

"type": "set",

"resource": "/video/nodes/{node name}",

"body": {

"color_spill": 0.1,

"distance": 0.1,

"enabled": false,

"falloff": 0.08,

"show_alpha": false,

"show_color_picker": false,

"show_key_color": false

}

}
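
As a rough illustration, a client could assemble the `set` message above programmatically and check the documented ranges before sending it. The helper name `chroma_key_set` and the node name `keyer` are hypothetical, and how the message is transported to the Rendering Engine is outside the scope of this sketch.

```python
import json

# Writable parameters taken from the table above.
RANGED = ("color_spill", "distance", "falloff")  # floats, 0.0 to 1.0
FLAGS = ("enabled", "show_alpha", "show_color_picker", "show_key_color")


def chroma_key_set(node_name, **params):
    """Build a `set` message containing only the given parameters."""
    body = {}
    for name, value in params.items():
        if name in RANGED:
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be in range 0.0 to 1.0")
            body[name] = float(value)
        elif name in FLAGS:
            body[name] = bool(value)
        else:
            raise KeyError(f"not a writable chroma_key parameter: {name}")
    return {
        "type": "set",
        "resource": f"/video/nodes/{node_name}",
        "body": body,
    }


if __name__ == "__main__":
    # Enable the keyer and show the mask while tuning.
    print(json.dumps(chroma_key_set("keyer", enabled=True, show_alpha=True)))
```

Because a `set` message may carry a subset of the parameters, the helper only includes the ones passed in.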

Commands

pick_color

Given a size and location, pick a color in the current video frame. The picked color will be the average color in the square defined by the parameters.

Parameters

| Name | Type | Required/optional | Description |
|---|---|---|---|
| size | uint32 | required | Size in pixels of the color picker square |
| x | float | required | X position of the center of the color picker square as a fraction of the frame’s width. Range 0.0 to 1.0 |
| y | float | required | Y position of the center of the color picker square as a fraction of the frame’s height. Range 0.0 to 1.0 |

Command template

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

Replace parameter values, enclosed by "<>", according to their data type.

{

"type": "command",

"resource": "/video/nodes/{node name}",

"body": {

"command": "pick_color",

"parameters": {

"size": <uint32>,

"x": <float>,

"y": <float>

}

}

}
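
The template above can be filled in with a small helper such as the following Python sketch. The function name `pick_color_command` and the node name `keyer` are hypothetical; the range checks simply mirror the parameter table.

```python
def pick_color_command(node_name, x, y, size):
    """Build a pick_color command message.

    x and y are the center of the picker square as fractions (0.0 to 1.0);
    size is the side of the square in pixels (uint32).
    """
    if not (0.0 <= x <= 1.0 and 0.0 <= y <= 1.0):
        raise ValueError("x and y must be in range 0.0 to 1.0")
    if isinstance(size, bool) or not isinstance(size, int):
        raise TypeError("size must be an integer")
    if not 0 <= size <= 0xFFFFFFFF:
        raise ValueError("size must fit in a uint32")
    return {
        "type": "command",
        "resource": f"/video/nodes/{node_name}",
        "body": {
            "command": "pick_color",
            "parameters": {"size": size, "x": float(x), "y": float(y)},
        },
    }
```

For example, `pick_color_command("keyer", 0.5, 0.5, 32)` samples a 32 pixel square centered in the frame.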

Chroma key / Key color

The key color that the chroma key node removes, given as separate red, green and blue channel values.

resource: /video/nodes/{node name}/key_color type: chroma_key

Parameters

| Name | Type | Access Mode | Default | Description |
|---|---|---|---|---|
| b | float | read-write | 0 | The blue channel, in range 0.0 to 1.0 |
| g | float | read-write | 0 | The green channel, in range 0.0 to 1.0 |
| r | float | read-write | 0 | The red channel, in range 0.0 to 1.0 |

Example `set` message

Below is a JSON example that includes all writable parameters for this resource. A `set` message may have a subset of these parameters, so exclude the ones you don't need to set.

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

{

"type": "set",

"resource": "/video/nodes/{node name}/key_color",

"body": {

"b": 0.0,

"g": 0.0,

"r": 0.0

}

}
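
Key colors are often known as 8-bit values (0 to 255) rather than as floats. The sketch below converts such a color to the 0.0 to 1.0 range used by this resource and builds the corresponding `set` message. The helper name `key_color_set` and the node name `keyer` are hypothetical.

```python
def key_color_set(node_name, r8, g8, b8):
    """Build a key_color `set` message from 8-bit RGB values (0 to 255)."""
    for value in (r8, g8, b8):
        if isinstance(value, bool) or not isinstance(value, int):
            raise TypeError("channel values must be integers")
        if not 0 <= value <= 255:
            raise ValueError("channel values must be in range 0 to 255")
    return {
        "type": "set",
        "resource": f"/video/nodes/{node_name}/key_color",
        # Scale each channel to the documented 0.0 to 1.0 range.
        "body": {"r": r8 / 255, "g": g8 / 255, "b": b8 / 255},
    }
```

A pure green screen color, `key_color_set("keyer", 0, 255, 0)`, yields a body with `r` and `b` at 0.0 and `g` at 1.0.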

Fade to black

A node to fade the incoming video stream to and from black.

resource: /video/nodes/{node name} type: fade_to_black

Parameters

| Name | Type | Access Mode | Default | Description |
|---|---|---|---|---|
| factor | float | read-write | 0 | The factor, where 1.0 means the output will be fully black and 0.0 means the input will be passed through unmodified. |
| type | string | read-only | fade_to_black | The video node type |

Example `set` message

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

{

"type": "set",

"resource": "/video/nodes/{node name}",

"body": {

"factor": 0.0

}

}
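
The effect of factor on a single pixel can be pictured with the sketch below. It assumes a linear blend toward black for values between the two documented end points (0.0 passes the input through, 1.0 gives black); the actual curve used by the Rendering Engine is not stated here, and the function name `apply_fade` is hypothetical.

```python
def apply_fade(pixel, factor):
    """Scale an (r, g, b) pixel toward black by the given factor.

    Assumes a linear blend: 0.0 leaves the pixel unchanged,
    1.0 returns black.
    """
    if not 0.0 <= factor <= 1.0:
        raise ValueError("factor must be in range 0.0 to 1.0")
    return tuple(channel * (1.0 - factor) for channel in pixel)
```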

Commands

fade_from

Fade from a fully black frame to the input video stream over a given time period

Parameters

| Name | Type | Required/optional | Description |
|---|---|---|---|
| duration_ms | uint32 | required | The duration of the automatic transition in milliseconds. |

Command template

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

Replace parameter values, enclosed by "<>", according to their data type.

{

"type": "command",

"resource": "/video/nodes/{node name}",

"body": {

"command": "fade_from",

"parameters": {

"duration_ms": <uint32>

}

}

}

fade_to

Fade to a fully black frame over a given time period

Parameters

| Name | Type | Required/optional | Description |
|---|---|---|---|
| duration_ms | uint32 | required | The duration of the automatic transition in milliseconds. |

Command template

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

Replace parameter values, enclosed by "<>", according to their data type.

{

"type": "command",

"resource": "/video/nodes/{node name}",

"body": {

"command": "fade_to",

"parameters": {

"duration_ms": <uint32>

}

}

}
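
Since fade_to and fade_from share the same parameter, a single helper can build either command. The following Python sketch is one way to do that; the function name `fade_command` and the node name `ftb` are hypothetical.

```python
def fade_command(node_name, to_black, duration_ms):
    """Build a fade_to (to_black=True) or fade_from (to_black=False) command."""
    if isinstance(duration_ms, bool) or not isinstance(duration_ms, int):
        raise TypeError("duration_ms must be an integer")
    if not 0 <= duration_ms <= 0xFFFFFFFF:
        raise ValueError("duration_ms must fit in a uint32")
    return {
        "type": "command",
        "resource": f"/video/nodes/{node_name}",
        "body": {
            "command": "fade_to" if to_black else "fade_from",
            "parameters": {"duration_ms": duration_ms},
        },
    }
```

For example, `fade_command("ftb", True, 1500)` requests a 1.5 second fade to black, and `fade_command("ftb", False, 1500)` requests the matching fade back to the input.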

Crop

A node to crop the incoming video stream. The node can crop the left, right, top and bottom edge of the incoming video stream. The areas outside of the cropped area will be transparent in the output.

resource: /video/nodes/{node name} type: crop

Parameters

| Name | Type | Access Mode | Default | Description |
|---|---|---|---|---|
| bottom | float | read-write | 1 | Position of the bottom crop edge, as a fraction of the image’s height (0.0 to 1.0) |
| left | float | read-write | 0 | Position of the left crop edge, as a fraction of the image’s width (0.0 to 1.0) |
| right | float | read-write | 1 | Position of the right crop edge, as a fraction of the image’s width (0.0 to 1.0) |
| top | float | read-write | 0 | Position of the top crop edge, as a fraction of the image’s height (0.0 to 1.0) |
| type | string | read-only | crop | The video node type |

Example `set` message

Below is a JSON example that includes all writable parameters for this resource. A `set` message may have a subset of these parameters, so exclude the ones you don't need to set.

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

{

"type": "set",

"resource": "/video/nodes/{node name}",

"body": {

"bottom": 1.0,

"left": 0.0,

"right": 1.0,

"top": 0.0

}

}
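
To see what a set of crop values means in pixels, the sketch below maps the four edges onto a frame of a given size. It assumes the values are fractions between 0.0 and 1.0 (as the defaults 0 and 1 suggest), that left and top are measured from the upper left corner, and that edges are rounded to whole pixels. The function name `crop_rect` is hypothetical and the rounding is not necessarily what the Rendering Engine does.

```python
def crop_rect(width, height, left=0.0, right=1.0, top=0.0, bottom=1.0):
    """Return (x0, y0, x1, y1) in pixels for the visible area after cropping."""
    for name, value in (("left", left), ("right", right),
                        ("top", top), ("bottom", bottom)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in range 0.0 to 1.0")
    if left > right or top > bottom:
        raise ValueError("crop edges must not cross each other")
    return (round(left * width), round(top * height),
            round(right * width), round(bottom * height))
```

With the default values the whole frame stays visible; `crop_rect(1920, 1080, left=0.25, right=0.75)` keeps only the middle half of the width.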

Video delay

A node to delay the video stream a given number of frames.

resource: /video/nodes/{node name} type: video_delay

Parameters

| Name | Type | Access Mode | Default | Description |
|---|---|---|---|---|
| delay | uint32 | read-write | 0 | The number of frames to delay the video |
| type | string | read-only | video_delay | The video node type |

Example `set` message

In the "resource" path, replace sections enclosed by braces "{}" with the name or id of the resource.

{

"type": "set",

"resource": "/video/nodes/{node name}",

"body": {

"delay": 0

}

}
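
Because the delay is given in frames, a delay expressed in milliseconds has to be converted using the frame rate of the stream. The sketch below does that conversion and builds the `set` message. The helper name `video_delay_set`, the node name `delay1` and the idea that the caller knows the frame rate are assumptions for illustration.

```python
def video_delay_set(node_name, delay_ms, frame_rate):
    """Build a video_delay `set` message from a delay in milliseconds.

    frame_rate is the stream's frame rate in frames per second.
    """
    if delay_ms < 0 or frame_rate <= 0:
        raise ValueError("delay_ms must be >= 0 and frame_rate must be > 0")
    # Round to the nearest whole number of frames.
    frames = round(delay_ms * frame_rate / 1000)
    return {
        "type": "set",
        "resource": f"/video/nodes/{node_name}",
        "body": {"delay": frames},
    }
```

At 50 frames per second, a 200 ms delay corresponds to 10 frames.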

Audio mixer resources

Overview

The audio mixer has the following data path:

The three main elements of the audio mixer are the Input strips, the Mixes, and the Outputs.

Input strips

Audio enters the audio mixer through the input strips. When a strip has been created, the audio for the strip is selected among the available channels in the Rendering Engine’s input slots.

In the example picture above, three sources are connected to the input slots. The slots have four, two, and six channels respectively. In the illustration we can see that the first strip uses a single channel from the first slot, the second strip uses channel three and four from the second slot and so on. Several input strips may select the same input slot channels.

Each input strip has two stereo output connection points, one before the volume fader (pre fader) and one after the volume fader (post fader). When a mix or an output is connected to the output of a strip, which of these two locations to take the audio from can be selected using the origin parameter.

Mixes

Mixes are used to combine the output from input strips, or other mixes, and also allow for further

filtering of the audio signal. When a mix has been created, the input strips and other mixes contributing